Sunday Rundown #93: AI Image Boom & Bad Journey

Sunday Bonus #53: My custom "Logo Prompter" GPT.

Happy Sunday, friends!

Welcome back to the weekly look at generative AI that covers the following:

Sunday Rundown (free): this week’s AI news + a fun AI fail.

Sunday Bonus (paid): an exclusive segment for my paid subscribers.

Let’s get to it.

🗞️ AI news

Here are this week’s AI developments.

👩‍💻 AI releases

New stuff you can try right now:

Alibaba’s Qwen team released several new models:

QVQ-Max, a visual reasoning model that can analyze images, solve math problems, and more.

Qwen2.5-VL-32B, a smaller multimodal model that beats larger rivals in visual reasoning and math tasks.

Qwen2.5-Omni, a multimodal model that can see, hear, talk, and write during real-time interactions.

Anthropic introduced a "think" tool that lets developers trigger an additional reasoning step to help Claude better handle specific complex situations.

DeepSeek upgraded its base model to V3-0324, improving its performance on coding and reasoning benchmarks.

ElevenLabs introduced Actor Mode, which lets creators use their own voice to guide the AI speech model’s reading of their script.

Google news:

The new Gemini 2.5 Pro is the company’s most intelligent model to date and outperforms other top reasoning models on most benchmarks. (Try it for free on Google AI Studio.)

Google Meet received nifty updates including AI-generated follow-up items, AI transcripts linked to relevant key video moments, and more.

Ideogram AI released Ideogram 3.0, convincingly outperforming all existing image models in human evaluations. (But it hasn’t yet been benchmarked against this week’s other AI image newcomers, Reve and 4o.)

Luma Labs added image-to-video capabilities to its Ray2 model, so you can animate starting images. (Luma suggests trying sketches and doodles.)

OpenAI news:

The new 4o image generation is a major paradigm shift in text-to-image AI (I explain why here).

GPT-4o got a few under-the-hood updates and is now better at following instructions, being creative, and tackling complex coding tasks.

Advanced Voice Mode in ChatGPT now has a better personality and is less likely to interrupt you during conversation.

Perplexity now has answer tabs that let you filter for images, videos, shopping, jobs, and more.

Pika Labs added a fun effect that lets you record a selfie video with your younger self.

Reve AI launched Reve Image, a SOTA image model that tops leaderboards and is great at following instructions and rendering short text. (Try it for free.)

🔬 AI research

Cool stuff you might get to try one day:

Alibaba introduced LHM, a model that can turn one photo into an animatable 3D avatar in seconds. (Try the demo.)

Anthropic is rumored to soon expand Claude 3.7 Sonnet’s context window from 200K to 500K tokens.

Microsoft is gearing up to launch two AI agents—Researcher and Analyst—to help with work tasks in Microsoft 365 Copilot. (Expected in April.)

Midjourney is finally preparing to release the much-awaited V7 model. (It’s been over a year since V6 came out.)

📖 AI resources

Helpful AI tools and stuff that teaches you about AI:

“Anthropic Economic Index: Insights from Claude 3.7 Sonnet” [REPORT] - the second issue, tracking the impact of the Claude 3.7 Sonnet launch.

“Tracing the thoughts of a large language model” [REPORT] - an exploration of Claude’s inner workings by Anthropic.

“Vibe Coding 101 with Replit” [VIDEO COURSE] - a free course by Replit’s President Michele Catasta and Head of Developer Relations Matt Palmer.

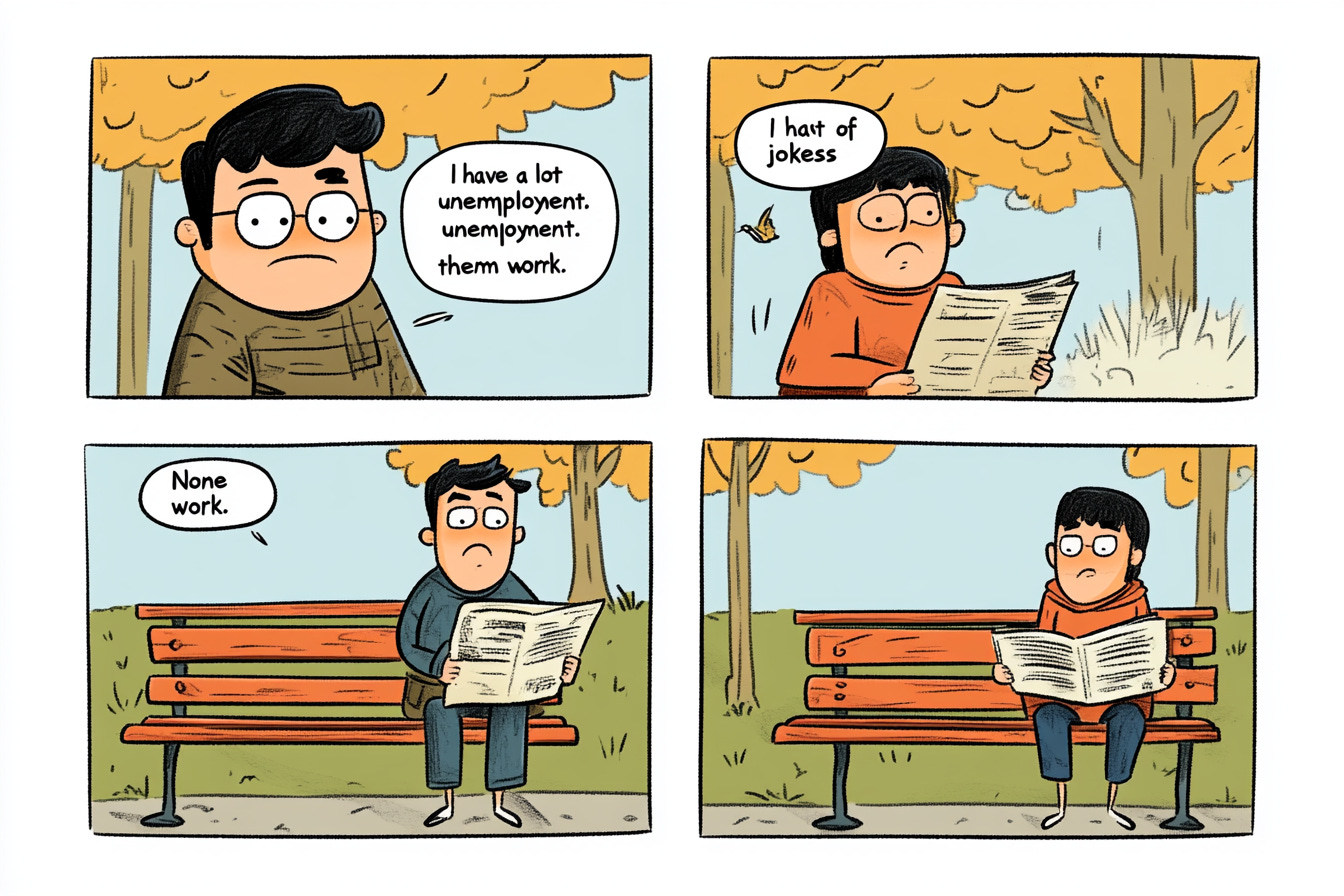

🤦‍♂️ AI fail of the week

Tried the prompt for this 4o cartoon in Midjourney. None of it worked.

💰 Sunday Bonus #53: Create killer logos with my “Logo Prompter” GPT

GPT-4o is now remarkably good at making images.

So good, in fact, that it can reliably translate highly detailed, precise prompts into perfectly matching visuals.

One ideal use case for this? Logo creation.

(Yup, we’ve come a long way from my first-ever Sunday Showdown about logos.)

But creating an effective logo requires a thorough understanding of what it should communicate, your brand guidelines, desired visual style, and many other criteria. That’s not always an obvious process.

Which is why I made the “Logo Prompter” GPT.

It walks you through your vision step by step, asks for relevant materials, and then generates a detailed, context-rich logo prompt for 4o.

Note: I originally wanted to create a “Logo Crafter” GPT that would make the logo directly. But for now, custom GPTs still use DALL-E 3 instead of 4o as the image model. So there’s a copy-paste step at the end. (Don’t worry, the Logo Prompter talks you through it.) I’ll upgrade the GPT once 4o image generation is available in custom GPTs.